Google Search Console Guide 2026, complete and updated

Google Search Console is the only official, reliable and free SEO tool. In this post you will find all the keys you need to navigate the tool, as well as the best tips from our SEO specialists that you may not know yet.

Very often, when we get started with SEO we rush to try several external tools and forget that we can get a huge amount of value from the best one of all. Let’s take a look:

What you will find in this guide

- 1. What is Google Search Console

- 2. How Google Search Console works

- 3. Types of property in Search Console

- 4. Basic Google Search Console settings

- 5. Sections of the tool

- 5.1. Performance reports

- 5.2. URL inspection tool

- 5.3. Statistics (formerly Search Console Insights)

- 5.4. Index coverage reports

- 5.5. Experience

- 5.6. Shopping

- 5.7. Enhancements

- 5.8. Settings => Crawl stats

- 5.9. Settings => Robots.txt status

- 5.10. Links

- 5.11. Google Search Console during domain migrations

- 5.12. (Deprecated) Limit crawl rate

- 5.13. Disavow links tool

1. What is Google Search Console

It is a free tool provided by Google that helps us understand how our website is crawled, indexed and ranked in the search engine. Besides analysing our site, Google Search Console (GSC, formerly Google Webmaster Tools) gives us improvement recommendations. Because its data comes straight from Google, it will always be more reliable than any third-party tool.

2. How Google Search Console works

The tool only works for verified site owners, which means your competitors will never be able to use it to spy on your SEO data. You must sign up here https://search.google.com/search-console/about and verify your property using different methods, but all of them require access to the site’s domain, hosting or CMS. After verification, the data is pulled from Google’s servers into the interface.

Regarding the data you will see in GSC, keep in mind:

- the data is not in real time; it used to have a delay of 2–3 days, but since 2025 it shows data from the last 24 hours (with a few hours of lag)

- it does not show all the available data (especially for large sites) but rather the essential information from a specific sample of URLs

- it goes a little beyond standard Google Search, as it also provides data from Google News and Discover, and can be expanded to other Google products

- it offers data from recent months (generally the last 16 months); if we want longer historical series we must export the data, use the API or external tools that allow this
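One way to keep a longer history is to archive the data month by month through the Search Console API. The sketch below only prepares the request bodies for the Search Analytics query method (the `startDate`, `endDate`, `dimensions` and `rowLimit` fields). The helper names and the choice of monthly windows are our own assumptions, and the authenticated API call itself is deliberately left out.

```python
from datetime import date, timedelta


def month_windows(start: date, end: date):
    """Yield (first_day, last_day) pairs covering start..end, one per calendar month."""
    current = start
    while current <= end:
        # Jump to the first day of the next month, then step back one day.
        next_month = (current.replace(day=1) + timedelta(days=32)).replace(day=1)
        last_day = min(next_month - timedelta(days=1), end)
        yield current, last_day
        current = next_month


def build_query_bodies(start: date, end: date, dimensions=("date", "query", "page")):
    """Build one request body per month (hypothetical helper, not part of any library)."""
    return [
        {
            "startDate": first.isoformat(),
            "endDate": last.isoformat(),
            "dimensions": list(dimensions),
            "rowLimit": 25000,  # assumed per-request maximum; check the current API docs
        }
        for first, last in month_windows(start, end)
    ]


bodies = build_query_bodies(date(2025, 11, 15), date(2026, 1, 10))
for body in bodies:
    print(body["startDate"], "->", body["endDate"])
```

Running something like this on a schedule and storing each month's rows in your own database is what lets you look back beyond the 16-month window.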

3. Types of property in Search Console

Basically there are two types of properties in GSC: domain properties and URL-prefix properties.

In the first case, we verify all protocol versions of a domain and its subdomains. This is the most interesting option, but we will not always have permissions to do it (essentially, access to the domain). It is especially recommended for large projects working with different subdomains and protocols. If we use a single domain and all versions are redirected to one protocol variant (for example https://), a URL-prefix property for that exact URL will be enough.

tip: if you work with a domain that has separate language folders (for example example.com, example.com/es and example.com/en), register them separately so that you can analyse their rankings in a segmented way.

In the past it was recommended to also register the version without www or without https, but if you already have everything correctly redirected (double-check it), it is no longer necessary.

4. Basic Google Search Console settings

Once your property is verified, here are some configuration tips that will help you get the most out of it:

- Link Search Console to Google Analytics. Even though it sounds surprising, these two tools are not connected by default, and it is extremely useful to import and export data both ways. In the settings you will find the steps to link them. If you have a YouTube channel or Google Ads, it is also highly recommended to connect them.

- If you work with a domain with different language folders (for example example.com, example.com/es and example.com/en), register each folder as its own URL-prefix property so you can analyse their rankings in a segmented way.

- If you have more than one subdomain and you have verified the property at domain level, it is still worth registering each subdomain as its own property in order to get reports broken down by subdomain.

- Turn on email notifications. Although there is a dedicated alerts section, you can easily miss some very important messages. To be safe, go to user settings => email preferences and make sure email alerts are enabled.

5. Sections of the tool

We include this section with the caveat that the menu changes constantly, so we will focus on the main items:

- Overview: summary of the main data the tool provides. It includes a recommendation section, although at the moment these are closer to random alerts and are rarely truly useful.

- URL inspection: paste a URL and you will know in real time what information Google has about it (when it was last crawled and whether it is indexed, among other relevant details).

- Performance: the results we are getting today from the SEO channel: impressions and clicks, all of it filterable and segmentable (country, URL, device, Google property, etc.).

- Indexing: the bible for any technical SEO. We can see which URLs are crawled, which are indexed, which are not, and in most cases we will know why.

- Experience: these reports show information about what happens once users land on your page (security, loading speed, mobile friendliness, etc.).

- User settings: here you can configure your preferences for email notifications (turn everything on or off, or only some types). You can also decide whether Search Console results appear directly in the Google interface for users who have access.

- Shopping: for e-commerce, Google tells us whether it is receiving the information it needs about our products. This is very important because it enables, for example, visibility in Google Shopping. As usual, red items are critical issues, yellow ones are recommendations, but in this case they deserve a careful look.

- Enhancements: in these sections you can see how you are implementing important structured data such as breadcrumbs, FAQ, logos or AMP pages, among others.

- Security & manual actions: manual actions is one of the most feared reports for any SEO, webmaster or CEO. In 99.9% of cases you will see “No issues detected”. If you see messages here, that is bad news: a human reviewer has detected a violation of Google’s guidelines. In the Security section you will find warnings about vulnerabilities that Google has detected on your site, usually when the site has been hacked.

- Legacy tools and reports: the prehistoric area. They are not available for domain properties.

- Links: useful information about external links pointing to your site and your internal linking.

- Settings: configuration items, permissions, exports, associations with other products and crawl statistics.

- Send feedback: you can send tickets to Google’s team if you want them to review some aspect of the tool or your reports, attaching screenshots if needed.

- Achievements: an attempt at gamification by Google, still in its early stages. It sets organic traffic goals for your property, congratulates you when you reach them and then sets a new target. Not particularly exciting if you do not measure SEO purely in tonnes of clicks.

Next we will look in more detail at some of the most important internal reports and tools:

5.1. Performance reports

In the short term, this is our main scoreboard, because it tells us what we are actually ranking for, and it is our best proof of results for the client (assuming the numbers are good).

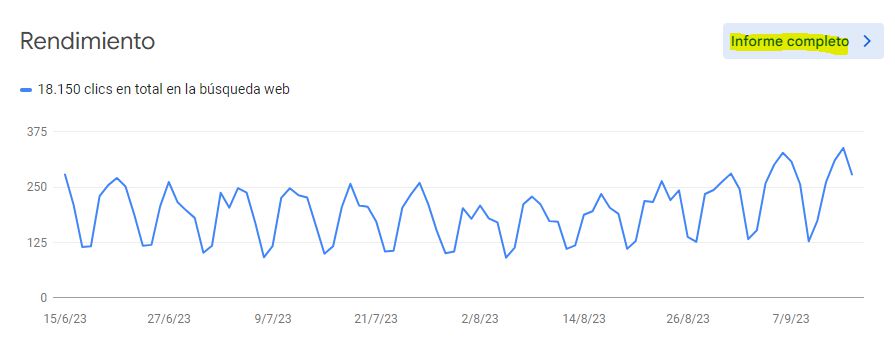

By default, it shows the evolution of SEO clicks over the last months, but if we click “Full report” we get into the heart of Search Console:

hint: looking at the previous example, you might think this site has unstable traffic. Nothing could be further from the truth: this project is in good health and is actually growing. What happens is that it is a B2B website and, as a result, traffic drops sharply at weekends. All B2B projects should show a similar pattern; if they do not, it is a sign that something is off.
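If you want to confirm the weekend effect with numbers rather than by eye, a quick check on exported daily clicks is enough. This is a minimal sketch: the figures are made up and the function name is our own.

```python
from datetime import date
from statistics import mean


def weekday_weekend_averages(daily_clicks):
    """Return (weekday_avg, weekend_avg) from a {date: clicks} mapping."""
    weekdays = [c for d, c in daily_clicks.items() if d.weekday() < 5]
    weekends = [c for d, c in daily_clicks.items() if d.weekday() >= 5]
    return mean(weekdays), mean(weekends)


# Made-up sample: one week starting on Monday 2 March 2026.
sample = {
    date(2026, 3, 2 + i): clicks
    for i, clicks in enumerate([120, 130, 125, 128, 110, 40, 35])
}
weekday_avg, weekend_avg = weekday_weekend_averages(sample)
print(f"weekday avg: {weekday_avg:.1f}, weekend avg: {weekend_avg:.1f}")
```

A weekend average far below the weekday average, week after week, is the B2B pattern described above.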

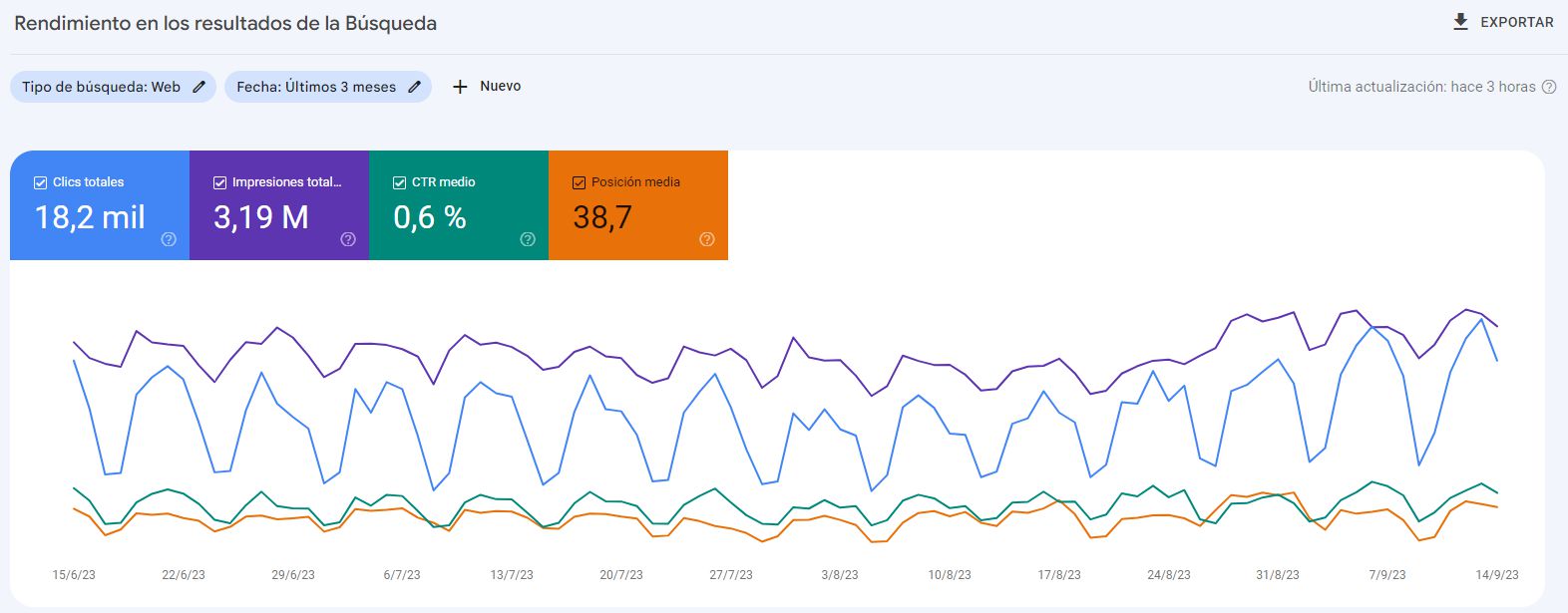

Once you are inside the report, you will find:

- top right, the question-mark icon tells you when data was last updated

- slightly above, there is a button to export the data

- in blue, you see the evolution of organic clicks

- in purple, you see total impressions (how many times your results were shown on the SERPs)

- in green, you see the average CTR, i.e. the percentage of impressions that resulted in a click

- in orange, you see the average position in the SERPs and how it has changed

- there is a date selector that lets you choose different periods (never more than 16 months) and compare against previous periods or the previous year

- there is a search type selector. The default is Web search, but for some projects it may be very interesting to analyse Image, Video or News search

- under + New you will find extra filters, constantly being expanded. We especially recommend country filters and device filters, which can provide very valuable information

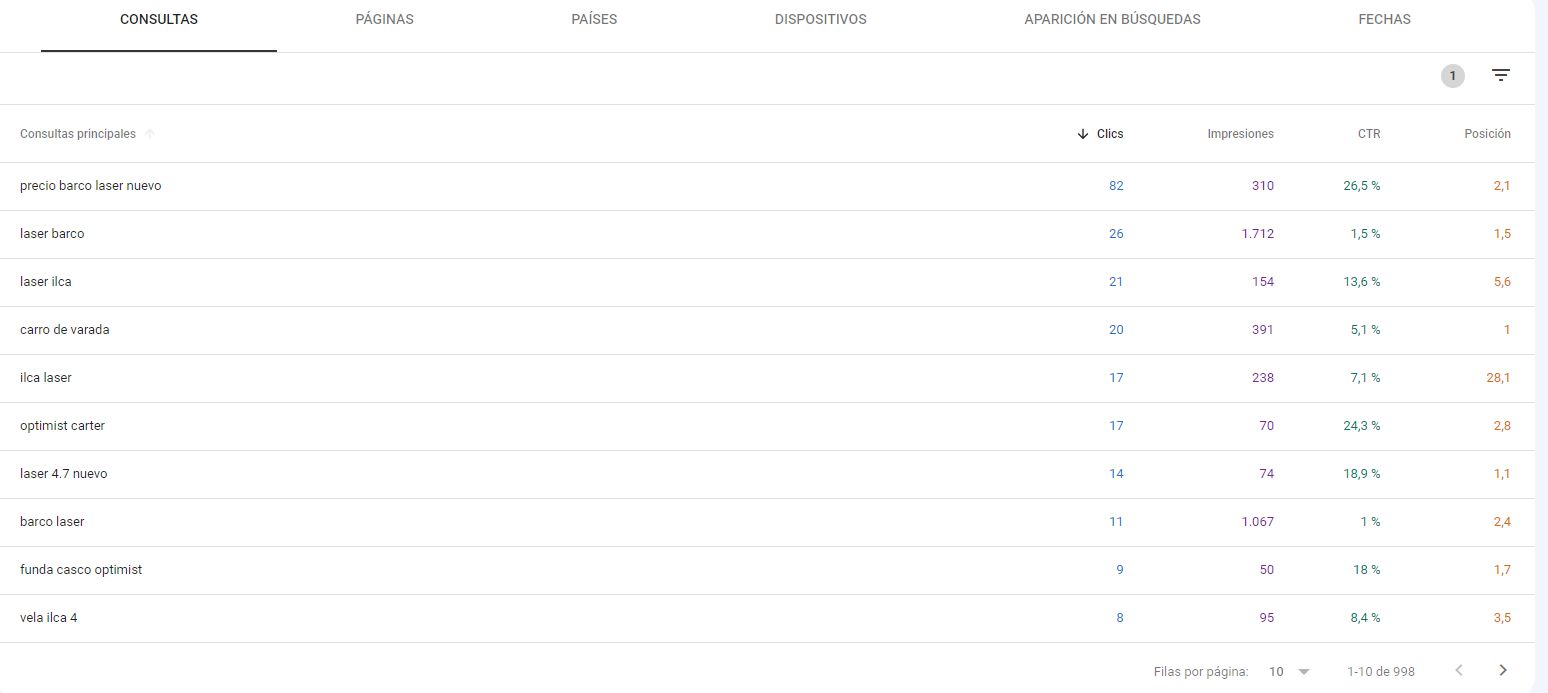

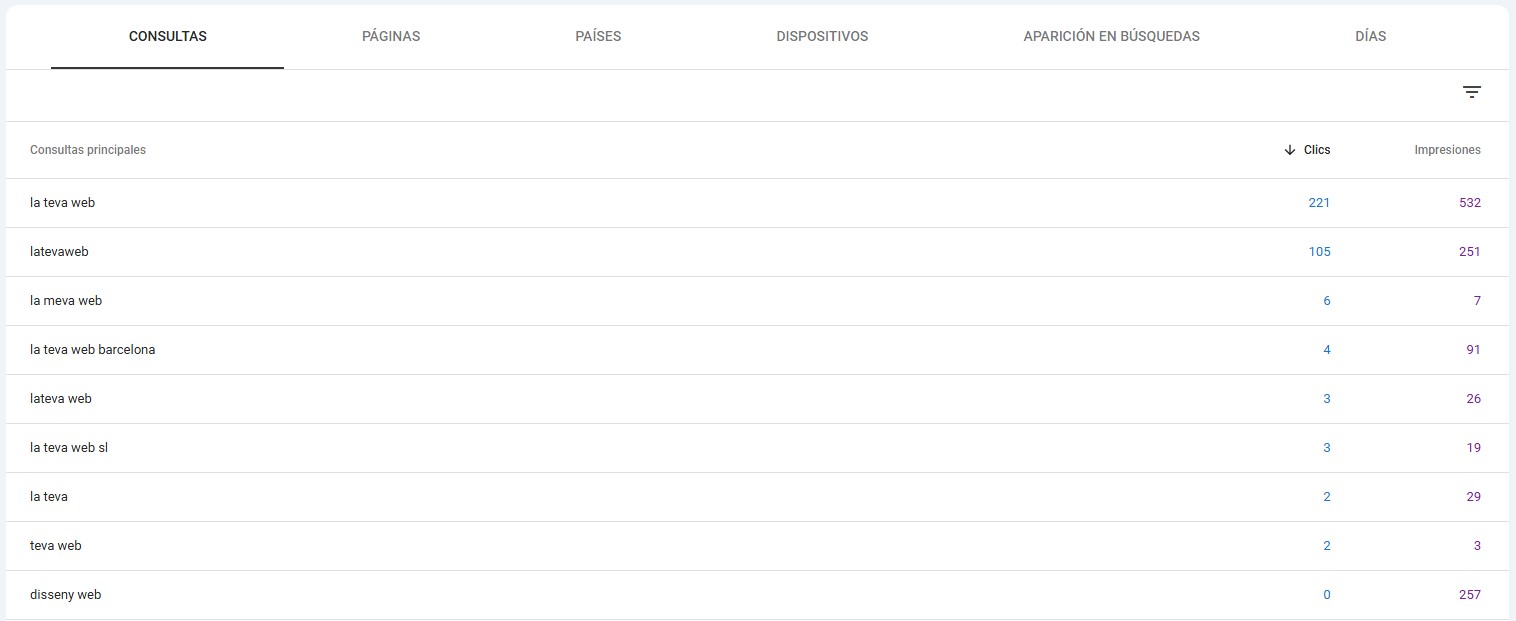

At the bottom we have the queries table. By default we see the queries that bring the most SEO traffic, but from there you should explore and play with filters. This will let you analyse the performance of keywords you already had on your radar, but you will also find unexpected SEO opportunities. Pay special attention to keywords with many impressions but few clicks: there is an opportunity here, because improving your average position could capture more traffic.
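The "many impressions, few clicks" check is easy to automate on an exported queries table. The sketch below assumes each row is a dictionary with `query`, `clicks`, `impressions` and `position` keys; the thresholds are arbitrary starting points and the data is invented.

```python
def ctr(clicks: int, impressions: int) -> float:
    """CTR as the percentage of impressions that ended in a click."""
    return 100.0 * clicks / impressions if impressions else 0.0


def find_opportunities(rows, min_impressions=1000, max_ctr=1.0):
    """Keep queries with many impressions but a low CTR, most impressions first."""
    hits = [
        r for r in rows
        if r["impressions"] >= min_impressions
        and ctr(r["clicks"], r["impressions"]) <= max_ctr
    ]
    return sorted(hits, key=lambda r: r["impressions"], reverse=True)


# Made-up rows in the shape of an exported queries table.
rows = [
    {"query": "search console guide", "clicks": 12, "impressions": 4800, "position": 11.2},
    {"query": "brand name", "clicks": 900, "impressions": 1500, "position": 1.1},
    {"query": "crawl stats report", "clicks": 3, "impressions": 2100, "position": 14.7},
    {"query": "rare long tail", "clicks": 1, "impressions": 40, "position": 6.0},
]
for row in find_opportunities(rows):
    print(row["query"], f'{ctr(row["clicks"], row["impressions"]):.2f}%')
```

In this sample the two flagged queries sit on page two, which is exactly the kind of keyword where a small ranking improvement can pay off.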

The secondary tabs allow you to view, filter or cross data using different dimensions: landing pages, countries, devices and more. In 2025, Google introduced improvements in how filters and navigation between reports work. Now, filters applied to one report remain active when you switch to another, and there is a button to reset all filters with a single click.

Depending on the type of site and content you offer, in Performance you may also see additional tabs to analyse how you appear in Google News or Discover, which is especially important for publishers and media. They follow the same structure and philosophy as the main report:

5.2. URL inspection tool

If we want to know what Google thinks of a specific URL on our site, we need to paste it into the main search bar at the top, which is increasingly prominent from a UX standpoint:

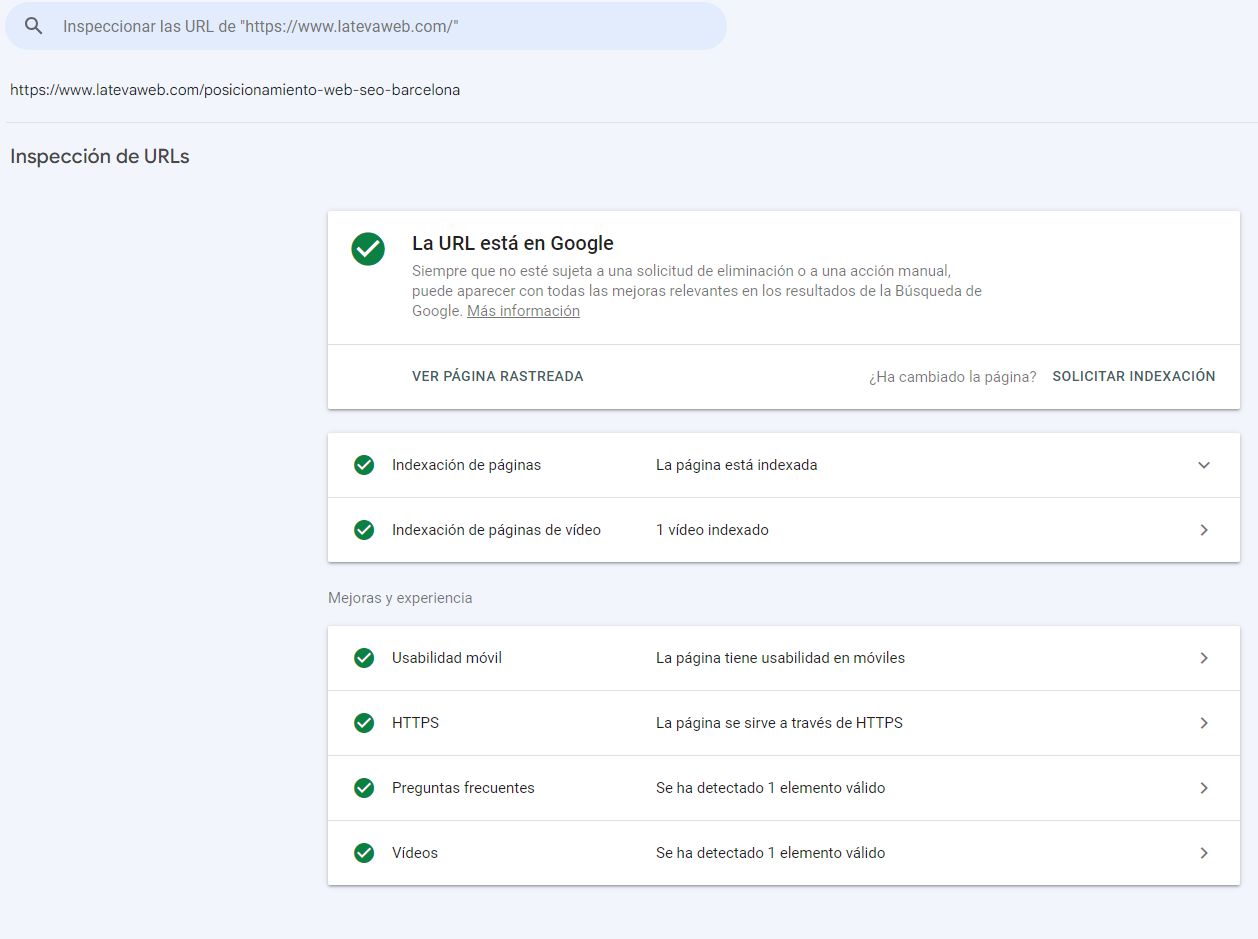

Let’s illustrate this with an example from our own site. We get the following information:

- URL is on Google: this page has been crawled and is in Google’s index, so it can appear in search results.

- View crawled page: especially useful when there is an issue with a URL, this feature lets us see what Google actually saw, either as raw HTML or as a screenshot. We can detect cases where Google cannot access certain content despite it being visible to users.

- Request indexing: this button should be used sparingly, but it is very handy. For URLs that are correct but not yet indexed, or URLs that are indexed but have undergone major changes that the bot has not yet taken into account (check the last crawl date), you can submit them for priority crawling. Google will take a closer look, but remember: this is a request, not a command, and it does not guarantee immediate crawling.

- Page indexing: this section shows that our page is indexed. Clicking it reveals more useful information, such as how Google first found the URL and when it was last crawled. This is especially helpful when a URL is not indexed, as it can provide clues as to why, or when we have updated a URL and want to know whether those changes have been processed.

- Other: depending on the content and characteristics of the URL, Google will also show what it detects there. In our example, it detected an SSL certificate, mobile friendliness and, via structured data, it recognised that the page contains a video and FAQ content.

In the URL inspection report we can click on “View tested page” and analyse what Google sees both at code level and visually. This is especially useful for websites that rely heavily on JavaScript.

5.3. Statistics (formerly Search Console Insights)

This is a tool that originally lived outside the main Search Console interface but has been gaining importance and is now integrated. In the Overview you will see a prominent link to it.

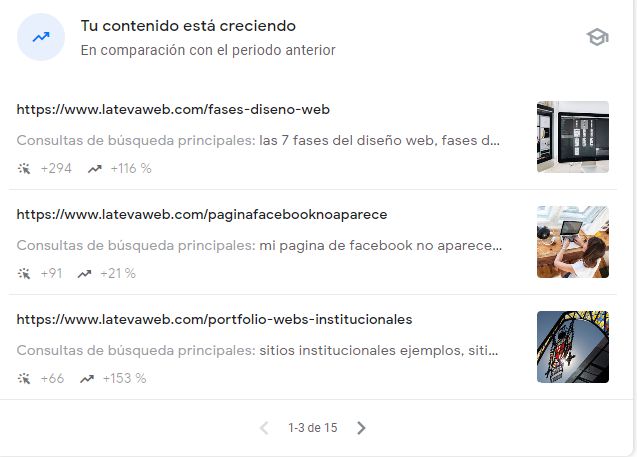

To use it, you must have GSC and Analytics linked. What does it do? Essentially, it shows data that we could obtain from the Performance section, but only after a fair amount of filtering and digging. This tool gives us the same information as pre-digested, easy-to-read reports.

This tool helps us identify the content that has been performing best organically over the last 3 months.

In 2026 the tool improved significantly and now offers a different angle and more data. The main improvement is that previously it only showed what was going well, while now it also highlights what is going wrong: pages and keywords that are losing traffic. That turns it into a very interesting SEO alert system.

Among the most recent improvements to the Statistics tool, the following stand out:

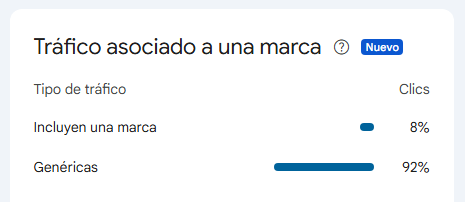

Brand filters

Now you can specifically filter and analyze branded queries separately from generic ones. This allows you to:

- Measure your brand awareness and recognition

- Understand what percentage of your traffic comes from branded searches vs. discovery

- Identify opportunities in non-branded searches that may require more content work
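If you work with exported data, you can approximate the same branded vs. non-branded split yourself. This is only a sketch: the regular expression, the placeholder brand "acme" and the sample rows are assumptions, and it does not reproduce how Google classifies branded queries.

```python
import re


def split_brand_traffic(rows, brand_pattern):
    """Return (branded_clicks, generic_clicks, branded_share_percent)."""
    brand_re = re.compile(brand_pattern, re.IGNORECASE)
    branded = sum(r["clicks"] for r in rows if brand_re.search(r["query"]))
    total = sum(r["clicks"] for r in rows)
    share = 100.0 * branded / total if total else 0.0
    return branded, total - branded, share


# "acme" (and the misspelling "acmee") are placeholder brand terms.
rows = [
    {"query": "acme shoes", "clicks": 300},
    {"query": "Acmee login", "clicks": 100},
    {"query": "best running shoes", "clicks": 500},
    {"query": "trail shoes review", "clicks": 100},
]
branded, generic, share = split_brand_traffic(rows, r"\bacme+\b")
print(f"branded: {branded}, generic: {generic}, branded share: {share:.1f}%")
```

Remember to include common misspellings of your brand in the pattern, otherwise they will be counted as generic traffic.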

Keywords grouped by themes

Google automatically groups queries by thematic categories, making it easier to:

- Detect which topics generate the most traffic

- Identify emerging topics with potential

- Prioritize content areas based on their actual performance

These features make the Statistics tool even more useful for strategic decision-making in your SEO content strategy.

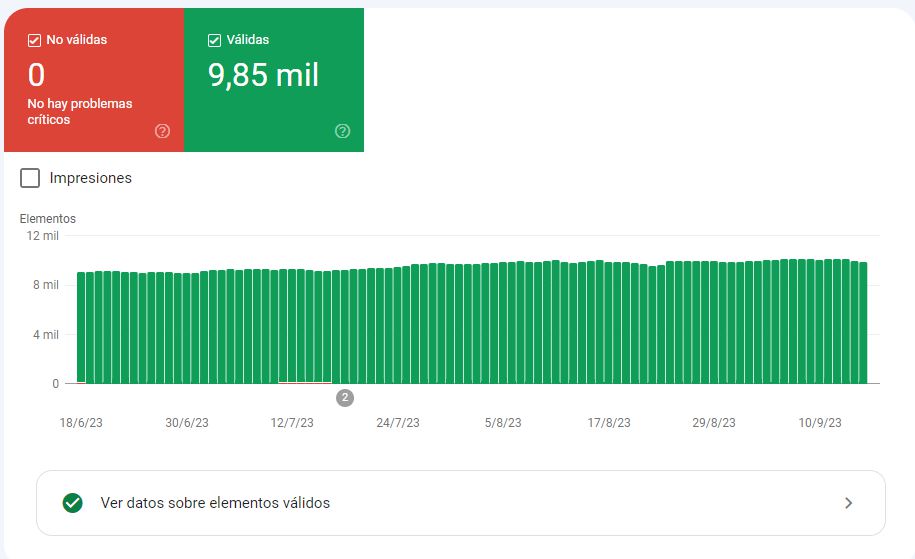

5.4. Index coverage reports

People who approach SEO only from a business and content perspective could stop here and not go any further. We already have a sense of the countries, topics and URLs we are ranking for and can gather ideas for future content actions. But if you want to go deeper, let’s continue.

In the index coverage reports we see information about how Google discovers, crawls and indexes (or doesn’t index) our pages. There are several sub-sections.

Page indexing

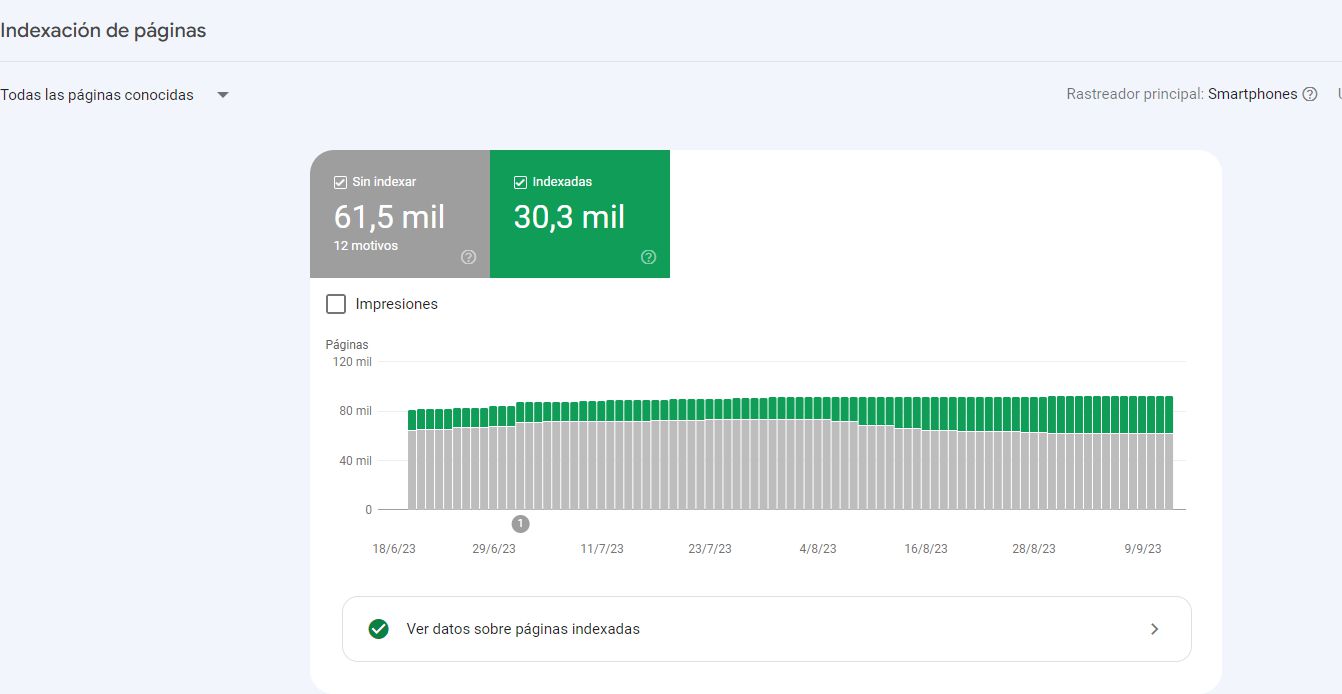

This report shows the total number of pages Google knows about and how many of them it has actually indexed (in green).

Look at the chart above: this project has more than 90,000 URLs, but Google has only indexed 30,000, around a third. Is that good or bad? It depends, because we do not know which URLs we really wanted to rank, and we may have many combination or filter URLs that are correctly configured not to be indexed.

In other posts we have explained what a sitemap is and what it should contain. Assuming the sitemap is correctly implemented and includes all the URLs you want to rank, apply that filter:

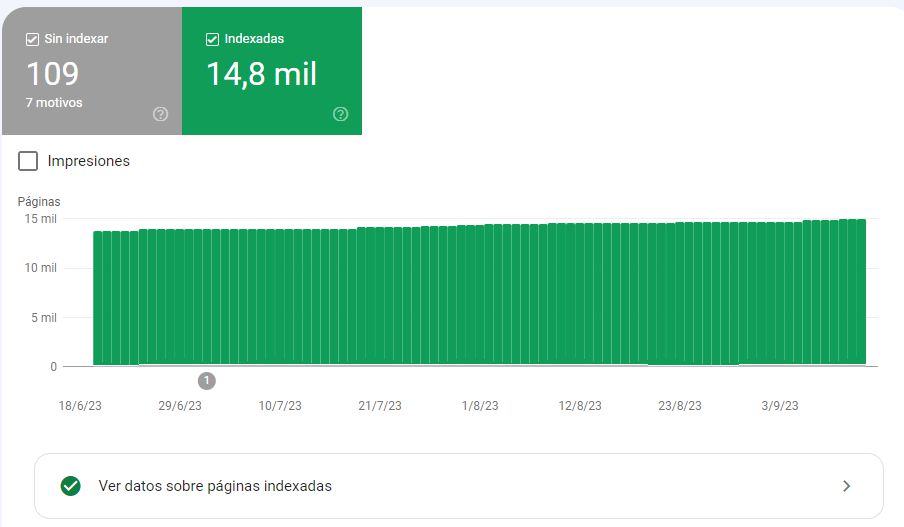

We get something like this:

It turns out that through the sitemaps we are sending roughly 15,000 pages, but twice as many end up indexed. What happened? We can obtain the inverse report, “Only not submitted pages”. In our case these are URLs with paginations, parameters and other variations that are not in the sitemap but are being indexed. We will need to take action through internal linking, canonicals or robots.txt.
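The same comparison can be reproduced offline with a simple set difference between the URLs in your sitemap and a list of indexed URLs exported from the report. A minimal sketch, with invented URLs and our own function names:

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set:
    """Extract every <loc> value from a sitemap XML string."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def compare(submitted: set, indexed: set) -> dict:
    """Split the mismatch between what we submit and what is indexed."""
    return {
        "indexed_not_submitted": sorted(indexed - submitted),
        "submitted_not_indexed": sorted(submitted - indexed),
    }


sitemap_xml = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/shoes</loc></url>
</urlset>"""

# Stand-in for a list of indexed URLs exported from the Page indexing report.
indexed = {
    "https://example.com/",
    "https://example.com/shoes",
    "https://example.com/shoes?page=2",
    "https://example.com/shoes?color=red",
}
result = compare(sitemap_urls(sitemap_xml), indexed)
print(result["indexed_not_submitted"])
```

Here the extra indexed URLs are a pagination and a filter parameter, the same kind of variations mentioned above.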

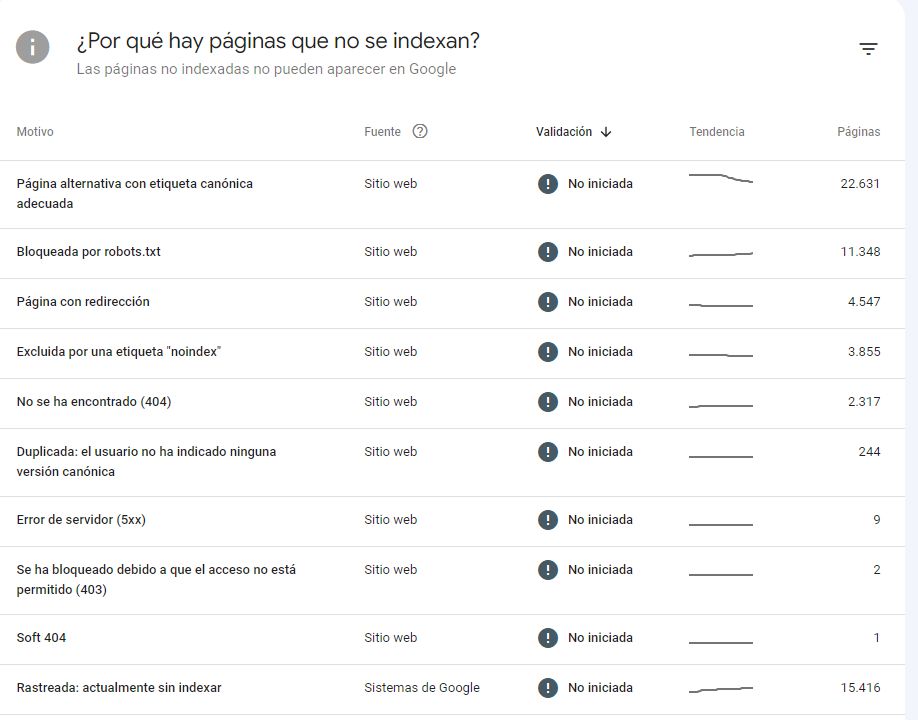

Why are there pages that are not indexed? There can be many reasons and, in most cases, it is perfectly fine for them to remain unindexed.

In each case Google shows you the reason, the trend and how many pages are affected. Once you have identified and fixed a specific issue type, you can ask Google to validate the fix:

Once we are looking at a list, it is a good idea to analyse a sample of URLs. For each page we know its URL, when it was last crawled, and we have the option to open the page or inspect the URL, where we will generally find the explanation:

Below is a summary of some of the most common issues you may encounter. The current format can be a bit confusing, especially when clients look at it, because genuine errors appear side by side with things that are not errors or are not always critical:

- Alternate page with proper canonical tag: not an error. This URL is not indexed because we ourselves told Google, via a canonical tag, that another URL is the preferred version. Still, double-check you have not overdone it.

- Blocked by robots.txt: if the URL is blocked by robots.txt, Google cannot crawl it and therefore cannot index it.

- Page with redirect: in 99% of cases this is not an error. It is serious only if you see the warning Redirect error.

- Soft 404: this is a worrying one. Google crawled a page that technically returns a 200 code, but it looks to the bot like an error page or an empty page. If the page is legitimate, you may need to add real content and then submit it for validation. If it is truly an error page, you need to configure it to return a proper 404.

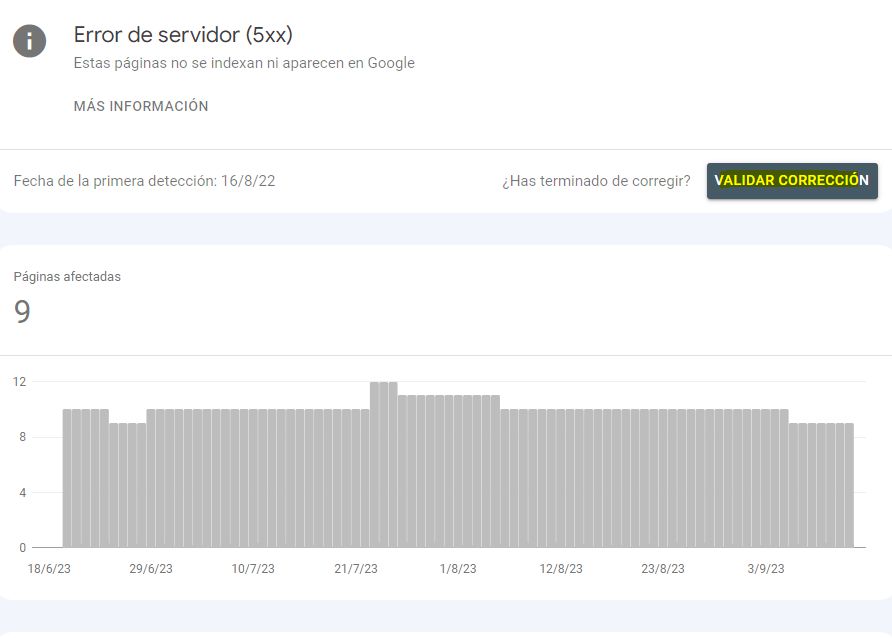

- Server error (5xx): this is severe. Probably, when Google tried to crawl the URL, your site was down. Check that the URLs are now working and send them for validation. You should also talk to your hosting provider to see whether these outages are frequent, because you may have overloaded resources and need to upgrade.

- Excluded by ‘noindex’ tag: if a page has a noindex tag, it will not be indexed. Make sure this is intentional and, to make Google’s life easier, avoid including URLs with noindex in your sitemap.
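To avoid sending noindex URLs in your sitemap, you can scan the HTML of each sitemap URL for a robots meta tag before publishing it. This sketch uses only the standard library and works on HTML strings you have already downloaded. It does not check the `X-Robots-Tag` HTTP header, and the sample pages are invented.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str) -> bool:
    """True if the page carries a robots meta tag with a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Made-up pages keyed by URL, as if fetched from the sitemap.
pages = {
    "https://example.com/": "<html><head><title>Home</title></head></html>",
    "https://example.com/thanks": '<html><head><meta name="robots" content="noindex, follow"></head></html>',
}
flagged = [url for url, html in pages.items() if has_noindex(html)]
print(flagged)
```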

If you want to go deeper into these topics, check our post “How to fix 5xx and 4xx errors thanks to Google Search Console”

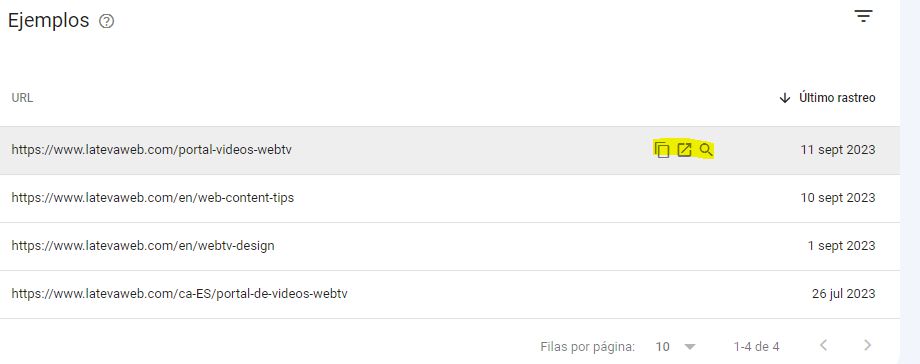

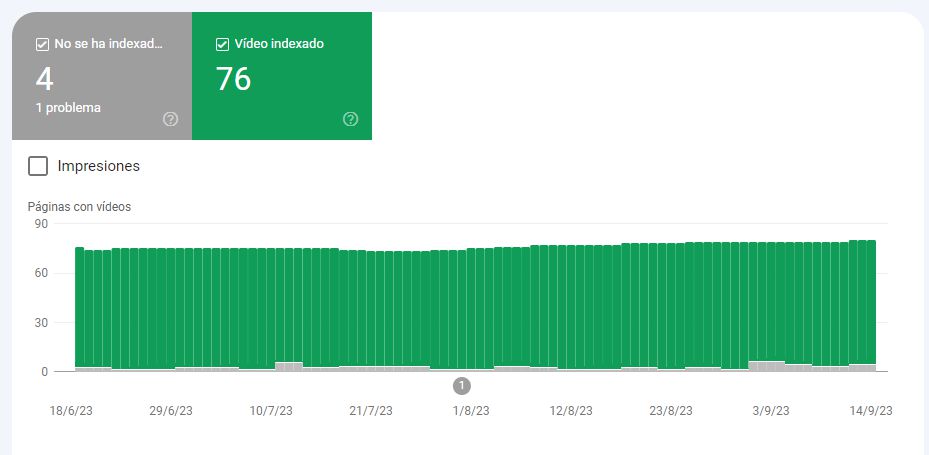

Pages with videos

This second index report only appears if you have embedded videos on your site. It shows the list of videos detected by Google on your site and which of them are indexed:

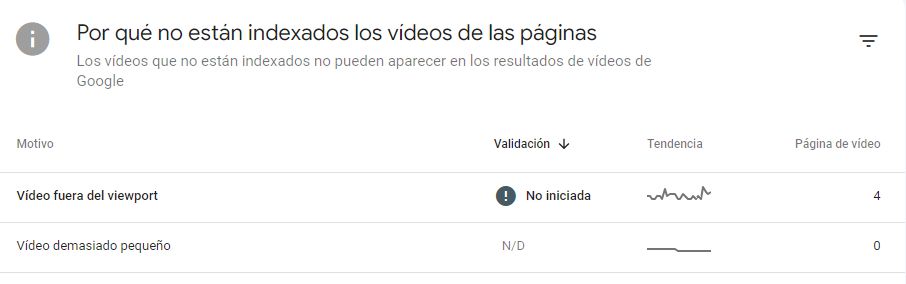

Only videos on indexed pages are considered. In our example, Google has detected 80 videos but has indexed only 76. Note: it will only consider indexing a video if it is important within the page content, not if it is just a side element. And if there is more than one video, it will only consider the first. You will see the reason for non-indexing in GSC:

The most common issue is “Video outside the viewport”. If you want the video to be indexed, you should move it higher on the page so it becomes more prominent.

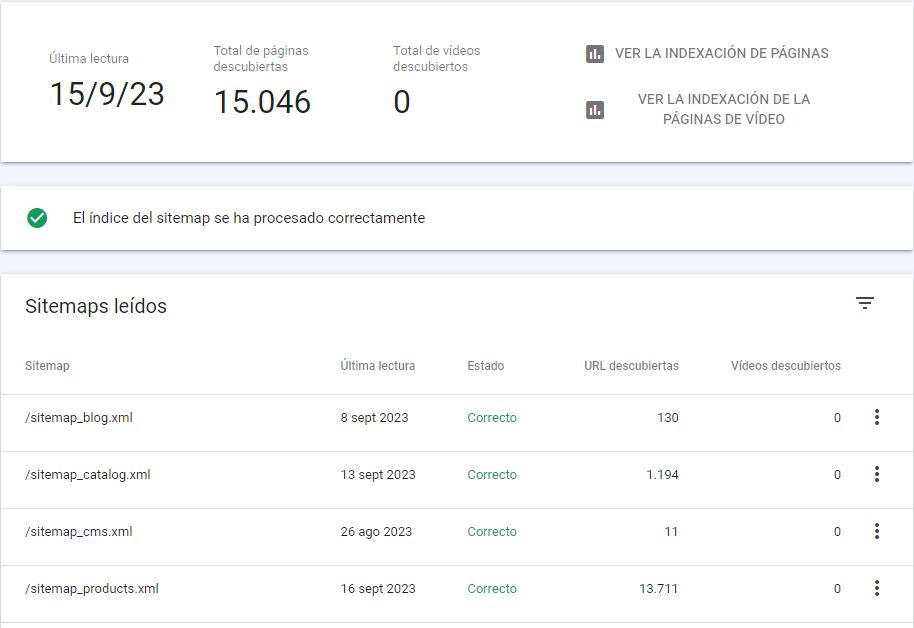

Sitemaps

This is one of the most important sections, especially for large sites. Submitting your sitemaps should be one of the very first steps when setting up a new GSC property. From this tab we can submit sitemaps to Google, check that they are processed correctly and, after a few days, start seeing data related to the URLs included in them.

This report provides useful information:

- last read date for each sitemap

- discovered pages

- read status

- discovered videos

If we click “View page indexing” we see indexation data related only to the URLs included in that sitemap.

tip: whenever the technology allows it, it is a good idea to split sitemaps based on the type of content, as we show in the example. And if your site is multilingual, it is ideal to separate them by language or language-country combinations, so you can analyse performance by segment.
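As an illustration of that split, the sketch below groups URLs by their first path segment and builds one sitemap per group. Treating the first folder as the "content type" is an assumption that will not fit every site, and the function names are our own.

```python
from collections import defaultdict
from urllib.parse import urlparse
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def group_by_section(urls):
    """Group URLs by their first path segment ('home' for the root)."""
    groups = defaultdict(list)
    for url in urls:
        segments = [s for s in urlparse(url).path.split("/") if s]
        groups[segments[0] if segments else "home"].append(url)
    return dict(groups)


def build_sitemap(urls) -> str:
    """Serialise a list of URLs as a minimal sitemap XML string."""
    urlset = ET.Element("urlset", xmlns=NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


urls = [
    "https://example.com/",
    "https://example.com/blog/a",
    "https://example.com/blog/b",
    "https://example.com/products/x",
]
groups = group_by_section(urls)
for section, section_urls in groups.items():
    print(f"sitemap-{section}.xml:", len(section_urls), "URLs")
```

Each group can then be written to its own file and submitted separately, so that “View page indexing” gives you one report per segment.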

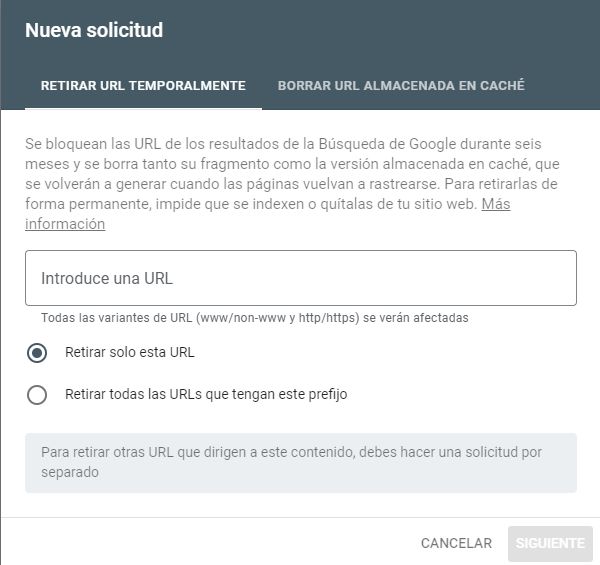

Removals

This tab requires advanced knowledge, because it is very delicate. Misuse can wipe out an entire website from Google. It is the section most often used when the site has been hacked with injected spam URLs, for instance. You should only use it in extreme cases, when you have URLs that are causing serious problems (for example copyright issues or malicious content).

The tool lets you remove a specific URL or groups of URLs that share a prefix (for example, web.com/blog):

The “Clear cached URL” tab is for URLs that you do not want to remove entirely, but where there is an error that you want to fix (for example a big spelling mistake or a wrong price). Google will remove the last cached version and, when it crawls the URL again, it will pick up the updated content.

To the right you will see the “Outdated content” tab. If a page or image is no longer available on your site but is still appearing on the SERP, or if it has been updated and Google is still showing the old version, you can request that it be refreshed. If the request is approved, the image or page will be removed from Google (if it no longer exists) or the search result will be updated (if it has changed).

Finally, the “SafeSearch filtering” tab is used to tell Google when content is intended for adults.

tip: keep in mind that removals are temporary. If we do not also take action on the site itself (delete content, change response codes, block with robots.txt, etc.), these URLs will eventually reappear and we will be reliving Groundhog Day.

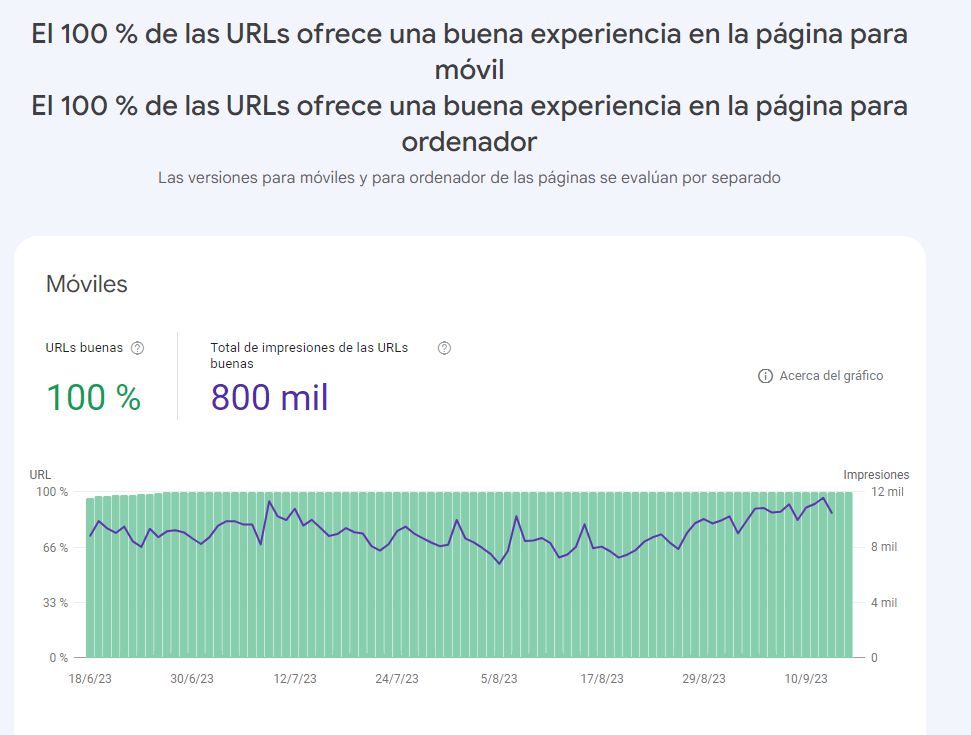

5.5. Experience

These reports used to be among the most visited by technical SEOs and clients, and they created many problems and misunderstandings. The intention was good (to evaluate mobile usability and performance on both mobile and desktop), but their use and misuse led to a lot of confusion. For that reason, Google has announced various changes, which we will only summarise here.

The ideal scenario is to have all three main experience reports in green (your site is mobile-friendly, passes Core Web Vitals, and user experience is good across all URLs on both desktop and mobile). Unfortunately, this will not always be possible and you should weigh the cost of improvement against the benefit. In any case, this is somewhat outside pure SEO and should be handled via a dedicated WPO audit.

The future of experience reports in GSC

Google has announced where it is taking these reports:

- the Page experience report will only include links to general guidelines about page experience

- the individual reports “Core Web Vitals” and “HTTPS” will remain in Search Console

- the “Mobile usability” report, the mobile-friendly test tool and the Mobile-Friendly Test API are being retired

Overall, there will be far less page experience information in Search Console and only the most serious issues will surface. If you want to work on these aspects, you will have to rely on other tools such as Lighthouse.

5.6. Shopping

These reports should only appear on GSC properties for e-commerce sites. If you do not run an online store and still see them, it is likely a mistake. If you do have an e-commerce site, this section is extremely important. In the past, most of the relevant information about products in Google was found in Google Merchant Center. While that is still a very useful tool, Google is gradually providing more insights directly in GSC.

In this view we can see the products Google has detected and how many it considers valid and therefore eligible to appear in Google. It is important to mark up products correctly according to Schema guidelines in your structured data, but also to send them via a product feed in Merchant Center. That way we give Google the full list of products with their URLs, images, stock, brand and so on. With this markup, our products can appear as rich results in classic blue links, but also in Images and Google Shopping, Lens or YouTube, among others.

If Google does not receive the essential information properly, those issues will be flagged in red and you should fix them urgently. Once that is done, you will see a list of enhancements. These appear in yellow and are not strictly required, but they are the sort of details Google appreciates in order to understand your product better and ultimately rank it more effectively:

In the example, Google recommends that we provide information about shipping policies, return policies, and that we specify the brand for many products that currently have none, among other things.

5.7. Enhancements

This report can contain several tabs related to the content of our site, essentially linked to structured data markup. These are some of the most common ones:

- Breadcrumbs: checks whether our breadcrumb navigation is properly marked up.

- AMP: although Google no longer strongly promotes AMP formats, if you use them it is crucial that they comply with Google’s AMP requirements. If not, you will see errors here.

- Logos: it is always advisable to tell Google which logo represents your brand, ideally using the Organization schema or another appropriate schema type.

- Review snippets: if you use reviews and want Google to treat them as such, they must be correctly marked up and come from providers that Google trusts. Self-serving, made-up reviews are not acceptable.

- Videos: here you will see Google’s suggestions regarding structured data on video content. Fields such as upload date or thumbnail images are essential for Google and any missing items will be flagged.

- Profile pages: in the age of EEAT it is very important for content to have a clear author and for that author to have a dedicated page with a bio, credentials, content list, etc. All of this needs to be marked up with dedicated schema. If the markup is incomplete or incorrect, you will see alerts here.

5.8. Settings => Crawl stats

Hidden inside the Settings section (still a strange choice), there is a real gem. It provides a kind of log report of how Google’s bots have interacted with our site over the last 90 days.

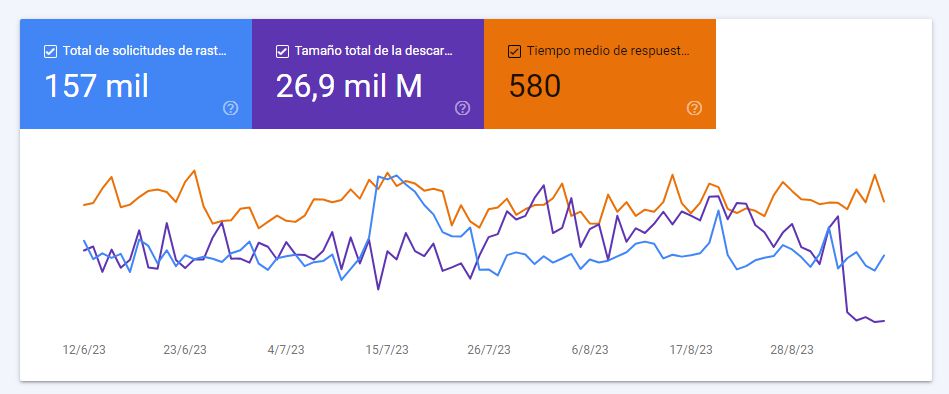

Some key parts:

We can see how many crawl requests our site receives, the total amount of downloaded content and the average response time of the server. Any optimisation we do at server level will have a direct positive impact here. In the example, a WPO improvement was implemented and you can clearly see the effect in the reduced download size.

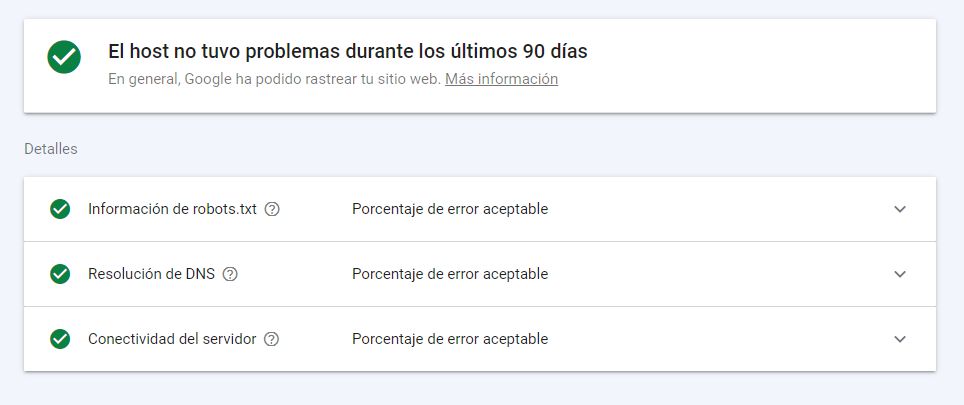

The Host status section shows whether Google has been able to access our site and robots.txt file correctly in the last 90 days. If this is not perfect, fixing it must be a high priority.

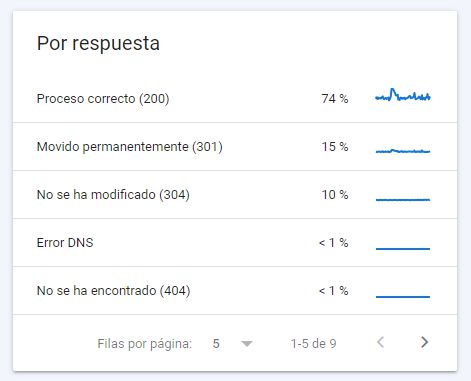

The second graph shows the response codes the bot has received. DNS errors and 5xx errors are particularly critical. And obviously, the more 200 responses, the better, since that means you are using your crawl budget efficiently. 4xx and 3xx codes are not necessarily bad, but if we can reduce them, even better.
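If you want to track this breakdown outside GSC, for example from your own server logs filtered to Googlebot requests, the share per response class takes a few lines. The sample data below is invented and the function name is our own.

```python
from collections import Counter


def status_class_shares(status_codes):
    """Return the percentage of responses per class ('2xx', '3xx', ...)."""
    counts = Counter(f"{code // 100}xx" for code in status_codes)
    total = sum(counts.values())
    return {cls: round(100.0 * n / total, 1) for cls, n in sorted(counts.items())}


# Made-up sample of 100 bot requests.
codes = [200] * 85 + [301] * 8 + [404] * 5 + [503] * 2
shares = status_class_shares(codes)
print(shares)
```

A rising 5xx share is the figure to watch most closely, for the reasons given above.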

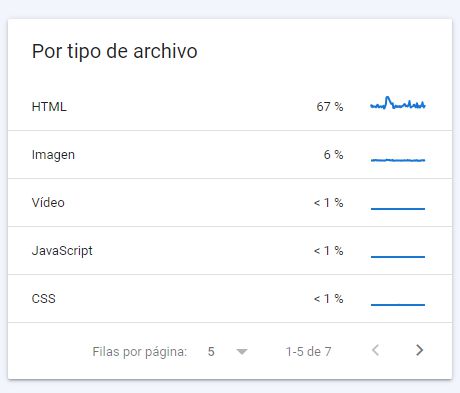

The third report shows the types of files Google encounters. For a typical website, most of them will be HTML, but it is normal to see JS, CSS, image files, PDFs, etc.

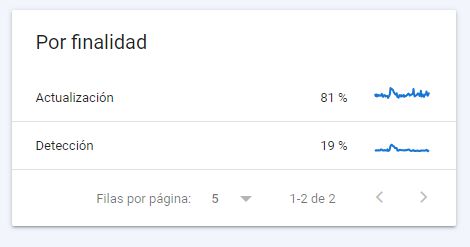

In the Purpose report, Google tells us whether the URLs crawled in recent days were new URLs (discovery) or whether it was re-crawling existing URLs to check for changes (refresh).

The By Googlebot type report gives us clues about which specific Googlebots are visiting our site. Today, most projects are primarily crawled by the Smartphone Googlebot, but depending on your content and your audience, other bots may also appear. You should keep this in mind and make sure your site is optimised accordingly. Update: Google has announced that this report will be removed from Search Console following the rollout of mobile-first indexing.

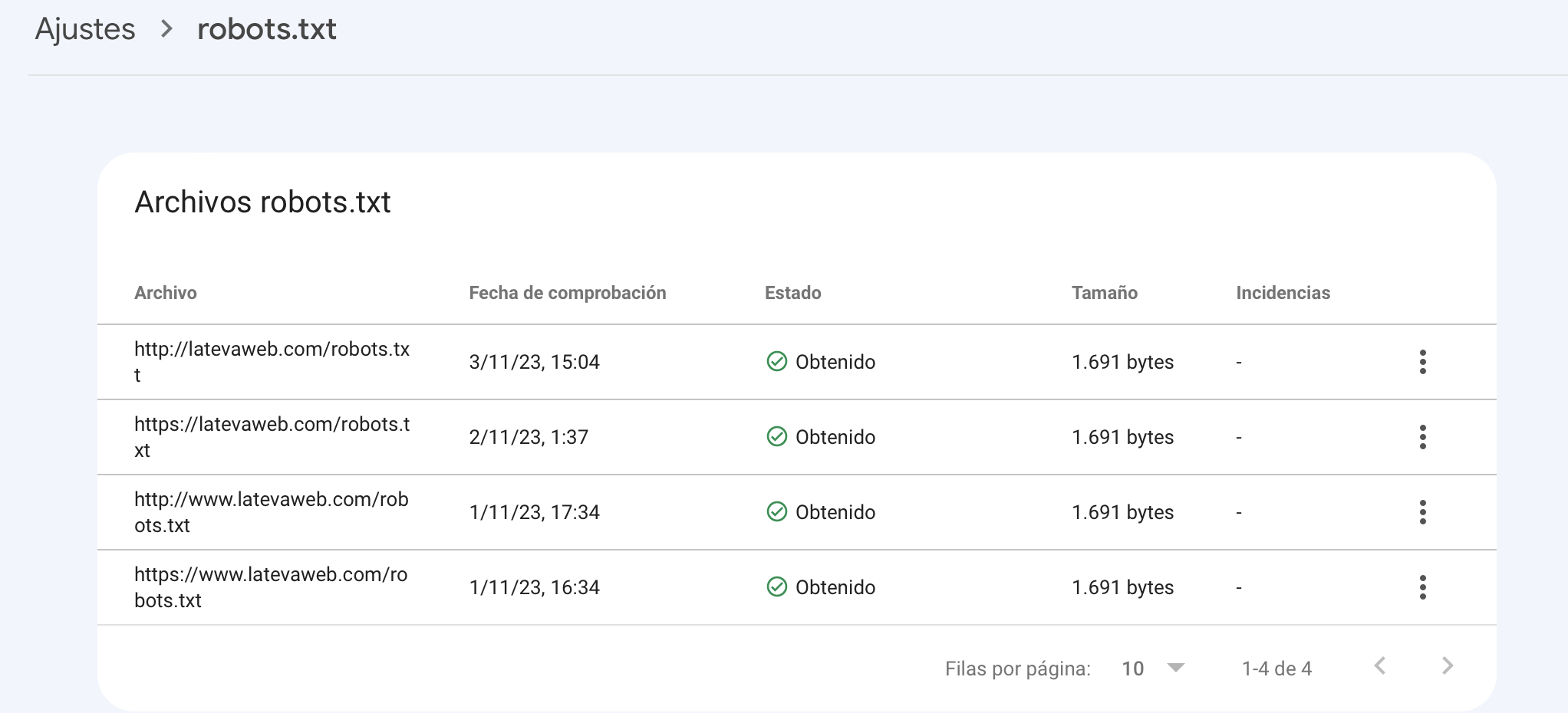

5.9. Settings => Robots.txt status

This section was introduced in November 2023. It is a place where we can check the status of our site’s robots.txt file and how Google has processed it.

In the first view, we see the file path, the last check date, whether it was retrieved correctly, the file size and any issues:

If we have made significant changes, we can click the three dots next to the last crawl and request a re-crawl. We can also open the processed robots.txt file. At first glance this seems useful, but in practice it is mostly a read-only report. We also believe anything related to robots.txt should be reserved for advanced users (which is not always the profile of whoever is looking at GSC). In addition, this new section came at the cost of losing a very popular tool: the old robots.txt tester, which is now gone. Overall the trade-off is negative: Search Console now only tells us whether the file is retrieved correctly, and we can no longer test whether a specific URL is allowed or blocked. For that we must rely on external tools.
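One option that requires no third-party service is the robots.txt parser in Python's standard library. The sketch below tests a few URLs against an invented robots.txt. Keep in mind that this parser may not mirror Google's matching rules exactly (for example around wildcards and rule precedence), so treat the result as a first check, not as Google's verdict.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content for illustration.
robots_txt = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Allow: /
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def is_allowed(url: str, user_agent: str = "Googlebot") -> bool:
    """Ask the standard-library parser whether user_agent may fetch url."""
    return parser.can_fetch(user_agent, url)


for url in (
    "https://example.com/blog/post",
    "https://example.com/cart/checkout",
    "https://example.com/search?q=shoes",
):
    print(url, "allowed" if is_allowed(url) else "blocked")
```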

5.10. Links

In this key section we get information about how other websites link to us and how we handle internal linking. It provides four reports, the first three related to external links:

- Top linked pages: URLs on your site that receive the most external links.

- Top linking sites: domains that send the most links to your site. You should identify any that send hundreds or thousands of links, as they may be harmful.

- Top linking text: the most common anchor texts used in external links. In a healthy profile, most of them should be brand-related; if you see many exact-match keyword anchors, you are taking on risk.

- Internal links: Google analyses how your own URLs link to each other and provides very useful insights. The URLs with the most internal links should ideally be your SEO priority pages; if that is not the case, you know you have work to do on internal linking and site architecture.

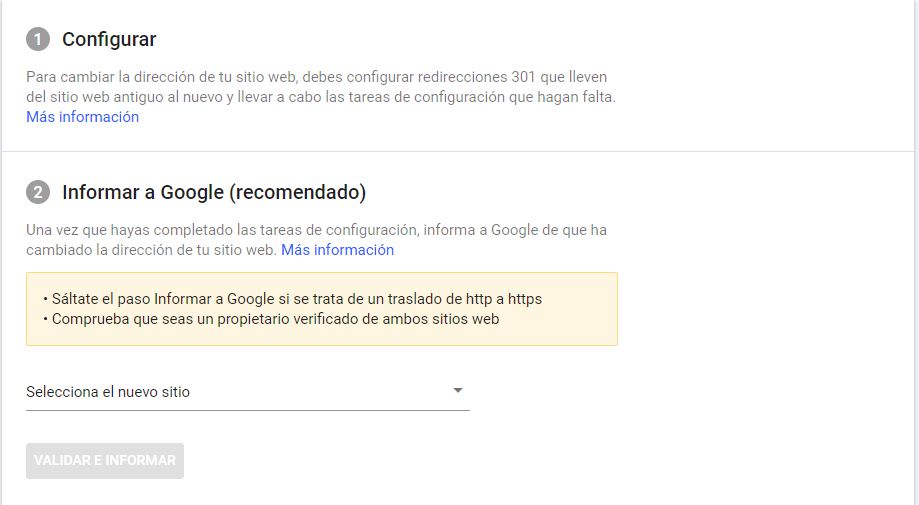

5.11. Google Search Console during domain migrations

If your site is going to change domain, this is one of the mandatory steps in an SEO migration. To do it properly, you must:

- have GSC properties for both the old and the new domains

- configure 301 redirects

- then follow the steps under Settings => Change of address
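For the redirect step, it helps to prepare the old-to-new URL map before touching the server. A minimal sketch, assuming the new site keeps the same paths and query strings and is served over https (the domains are placeholders and the function name is our own):

```python
from urllib.parse import urlsplit, urlunsplit


def map_to_new_domain(old_url: str, new_host: str) -> str:
    """Return the URL on the new host, keeping path and query and forcing https."""
    parts = urlsplit(old_url)
    return urlunsplit(("https", new_host, parts.path or "/", parts.query, ""))


old_urls = [
    "http://old-example.com/blog/post?ref=1",
    "https://old-example.com",
]
redirect_map = {url: map_to_new_domain(url, "new-example.com") for url in old_urls}
for old, new in redirect_map.items():
    print(old, "->", new)
```

If the URL structure also changes during the migration, the map needs explicit rules per section instead of this one-to-one copy.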

5.12. (Deprecated) Limit crawl rate

There used to be a (very well hidden) option to limit how often Google could crawl your site:

In 99% of cases this was never used, except when the bot was causing serious issues and only for very short, specific periods (flash campaigns, Black Friday, etc.). Because of that, and because Google’s own automated crawl management has improved, Google retired the Crawl Rate Limiter tool in January 2024.

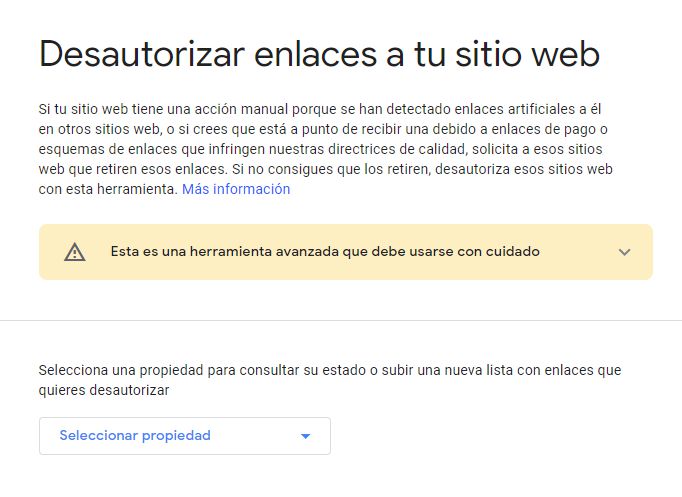

5.13. Disavow links tool

This is another deliberately hidden tool. It lets us upload a file listing links or entire domains whose links we want to disavow. In other words, we have detected links from spammy sites and we do not want Google to take them into account. If you are interested in this topic, check our post about Google’s Disavow tool.
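The file itself is plain text with one entry per line: either a full URL or a whole domain written as `domain:example.com`, with optional comment lines starting with `#`. The sketch below generates such a file from lists you have already reviewed by hand. The function name and the sample domains are our own.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Return disavow file content: optional comment, domain entries, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    lines += [f"domain:{d}" for d in sorted({d.lower() for d in domains})]
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


content = build_disavow_file(
    domains=["spam-b.example", "Spam-A.example", "spam-a.example"],
    urls=["https://other.example/bad-page"],
    note="Reviewed manually",
)
print(content)
```

Review every entry before uploading: disavowing good links by mistake can do more harm than the spammy ones.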
